The chi-square (CS) goodness-of-fit test is applied to binned data (i.e., data grouped into classes). An attractive feature of the CS test is that it can be applied to any univariate distribution for which the cumulative distribution function can be calculated. However, the value of the CS test statistic depends on how the data are binned, so the test is sensitive to the choice of bins, and it requires a sufficiently large sample for the CS approximation to be valid. The CS test can be applied to discrete distributions such as the binomial and the Poisson, while the KS test is restricted to continuous distributions.

The null hypothesis is that the dataset follows a specified distribution, while the alternative hypothesis is that the dataset does not follow the specified distribution. The hypothesis is tested using the CS statistic defined as

$$\chi^2 = \sum_{i=1}^{k} \frac{(O_i - E_i)^2}{E_i}$$

where $O_i$ is the observed frequency for bin $i$ and $E_i$ is the expected frequency for bin $i$. The expected frequency is calculated by

$$E_i = N\left(F(Y_U) - F(Y_L)\right)$$

where $F$ is the cumulative distribution function for the distribution being tested, $Y_U$ is the upper limit for class $i$, $Y_L$ is the lower limit for class $i$, and $N$ is the sample size.
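The two formulas can be sketched in a few lines of Python. This is an illustrative sketch only, not Risk Simulator's implementation: the function names, the bin edges, and the observed counts are all hypothetical, and a standard normal distribution is assumed as the distribution being tested.

```python
from statistics import NormalDist


def expected_frequencies(cdf, edges, n):
    """E_i = N * (F(Y_U) - F(Y_L)) for each bin between consecutive edges."""
    return [n * (cdf(hi) - cdf(lo)) for lo, hi in zip(edges[:-1], edges[1:])]


def chi_square_statistic(observed, expected):
    """Sum over bins of (O_i - E_i)^2 / E_i."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


# Hypothetical data: 100 observations grouped into four classes.
# The outer classes are open-ended so that the expected counts sum to N.
edges = [float("-inf"), -1.0, 0.0, 1.0, float("inf")]
observed = [18, 30, 36, 16]
n = sum(observed)

# Assumed distribution under the null hypothesis: standard normal.
expected = expected_frequencies(NormalDist(0.0, 1.0).cdf, edges, n)
statistic = chi_square_statistic(observed, expected)

print([round(e, 2) for e in expected])
print(round(statistic, 3))
```

Re-running the sketch with different `edges` (and the correspondingly re-counted `observed` values) gives a different statistic for the same data, which is the bin sensitivity noted earlier.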

The test statistic follows a CS distribution with (k – c) degrees of freedom where k is the number of non-empty cells and c is the number of estimated parameters (including location and scale parameters and shape parameters) for the distribution + 1. For example, for a three-parameter Weibull distribution, c = 4. Therefore, the hypothesis that the data are from a population with the specified distribution is rejected if ![]() where

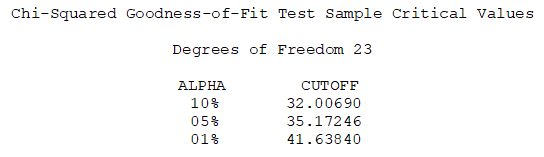

where ![]() is the CS percent point function with (k – c) degrees of freedom and a significance level of α.

is the CS percent point function with (k – c) degrees of freedom and a significance level of α.

Again, because the null hypothesis is that the data follow the specified distribution, when the test is applied to distributional fitting in Risk Simulator, a low p-value (e.g., less than 0.10, 0.05, or 0.01) indicates a bad fit (the null hypothesis is rejected), while a high p-value indicates a statistically good fit (the null hypothesis is not rejected).
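The rejection rule and the p-value reading are two views of the same decision, which the following Python sketch illustrates. It is not how Risk Simulator computes its results: the helper names, the statistic of 9.2, the six bins, and the two estimated parameters are hypothetical values chosen for illustration. The CS tail probability is computed here with a simple series so that the example needs only the standard library; in practice a library routine such as `scipy.stats.chi2.sf` and `scipy.stats.chi2.ppf` would normally be used instead.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for a chi-square variable.

    Uses the series for the regularized lower incomplete gamma function.
    Adequate for moderate x; loses precision for very small tail values.
    """
    if x <= 0:
        return 1.0
    a, z = df / 2.0, x / 2.0
    term = total = 1.0 / a
    n = 1
    while term > 1e-15 * total:
        term *= z / (a + n)
        total += term
        n += 1
    lower = total * math.exp(a * math.log(z) - z - math.lgamma(a))
    return max(0.0, 1.0 - lower)


def chi2_critical_value(alpha, df):
    """Critical value x with P(X > x) = alpha, found by bisection."""
    lo, hi = 0.0, 1.0
    while chi2_sf(hi, df) > alpha:  # grow the bracket until it contains x
        hi *= 2.0
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if chi2_sf(mid, df) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


# Hypothetical fit: 6 non-empty bins, 2 parameters estimated from the data.
k = 6
num_estimated_params = 2
c = num_estimated_params + 1      # c = estimated parameters + 1
df = k - c                        # degrees of freedom

statistic = 9.2                   # hypothetical CS statistic
alpha = 0.05

critical = chi2_critical_value(alpha, df)
p_value = chi2_sf(statistic, df)
reject = statistic > critical     # equivalent to p_value < alpha

print(df, round(critical, 3), round(p_value, 4), reject)
```

With these assumed inputs the statistic exceeds the critical value and the p-value falls below 0.05, so the fit would be judged poor at the 5% level, although not at the 1% level.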